Abstract:

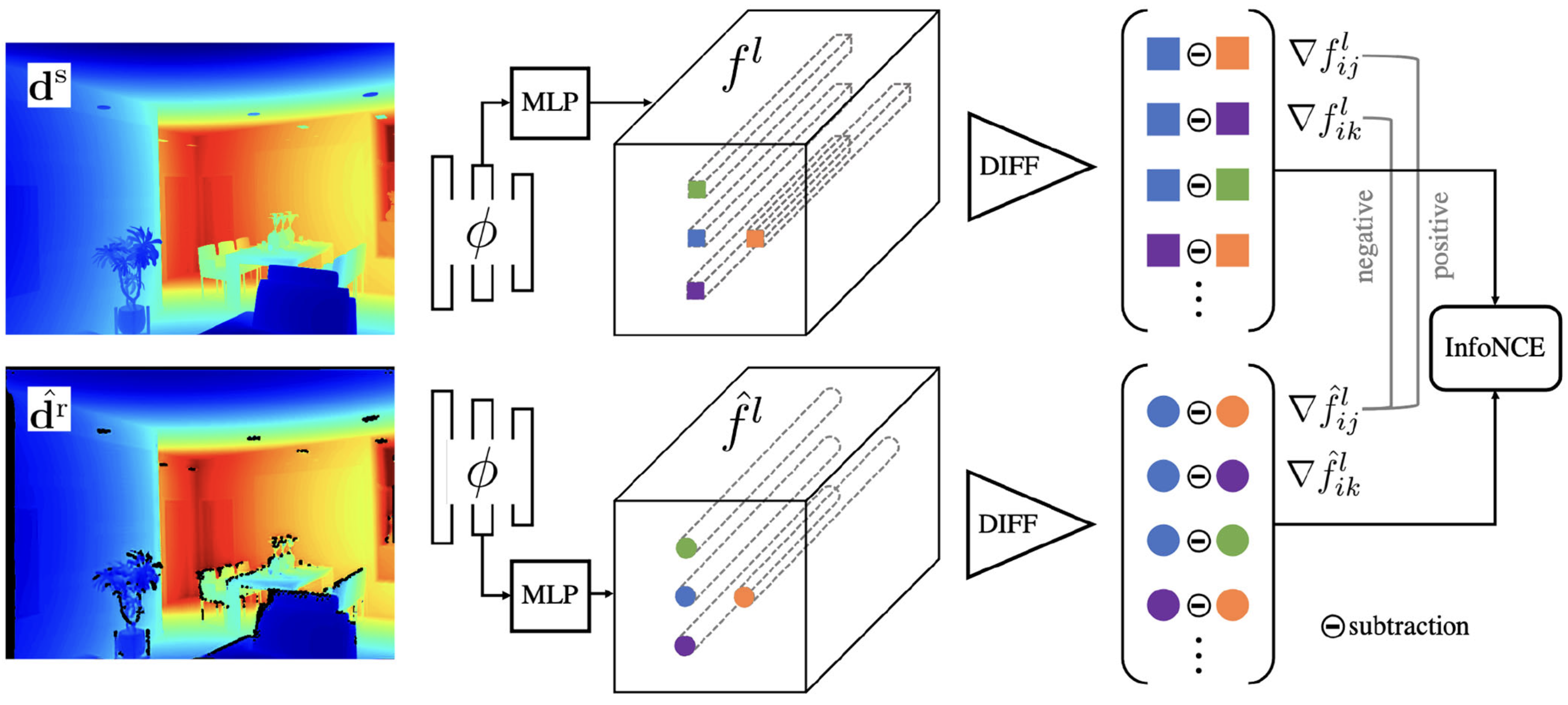

We describe a method for unpaired realistic depth synthesis that learns diverse variations from real-world depth scans and ensures geometric consistency between the synthetic and synthesized depth. The synthesized realistic depth can then be used to train task-specific networks, facilitating label transfer from the synthetic domain. Unlike existing image synthesis pipelines, where geometry is mostly ignored, we treat the geometry carried by the depth scans as a quantity in its own right. We propose differential contrastive learning, which explicitly enforces the underlying geometric properties to be invariant to the real variations being learned. The resulting depth synthesis method is task-agnostic, and we demonstrate its effectiveness through extensive evaluations on real-world geometric reasoning tasks. Networks trained with depth synthesized by our method consistently outperform the state of the art across a wide range of tasks, and can even surpass networks supervised with full real-world annotations when slightly fine-tuned, showing good transferability.

BibTeX:

@article{syzlg-ralicra-2022,
  title={DCL: Differential Contrastive Learning for Geometry-Aware Depth Synthesis},
  author={Shen, Yuefan and Yang, Yanchao and Zheng, Youyi and Liu, C Karen and Guibas, Leonidas J},
  journal={IEEE Robotics and Automation Letters},
  volume={7},
  number={2},
  pages={4845--4852},
  year={2022},
  publisher={IEEE}
}