Abstract:

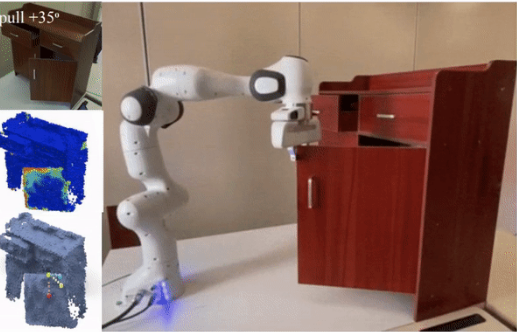

Perceiving and manipulating 3D articulated objects (e.g., cabinets, doors) in human environments is an important yet challenging task for future home-assistant robots. The space of 3D articulated objects is exceptionally rich in its myriad semantic categories, diverse shape geometry, and complicated part functionality. Previous works mostly abstract kinematic structure with estimated joint parameters and part poses as the visual representations for manipulating 3D articulated objects. In this paper, we propose object-centric actionable visual priors as a novel perception-interaction handshaking point, in which the perception system outputs more actionable guidance than kinematic structure estimation by predicting dense geometry-aware, interaction-aware, and task-aware visual action affordance and trajectory proposals. We design an interaction-for-perception framework, VAT-Mart, to learn such actionable visual representations by simultaneously training a curiosity-driven reinforcement learning policy that explores diverse interaction trajectories and a perception module that summarizes and generalizes the explored knowledge for pointwise predictions among diverse shapes. Experiments on the large-scale PartNet-Mobility dataset in the SAPIEN environment demonstrate the effectiveness of the proposed approach and show promising generalization capabilities to novel test shapes, unseen object categories, and real-world data.

BibTeX:

@InProceedings{wu2022vatmart,
  title = {{VAT-Mart}: Learning Visual Action Trajectory Proposals for Manipulating 3D ARTiculated Objects},
  author = {Wu, Ruihai and Zhao, Yan and Mo, Kaichun and Guo, Zizheng and Wang, Yian and Wu, Tianhao and Fan, Qingnan and Chen, Xuelin and Guibas, Leonidas and Dong, Hao},
  booktitle = {International Conference on Learning Representations (ICLR)},
  year = {2022}
}