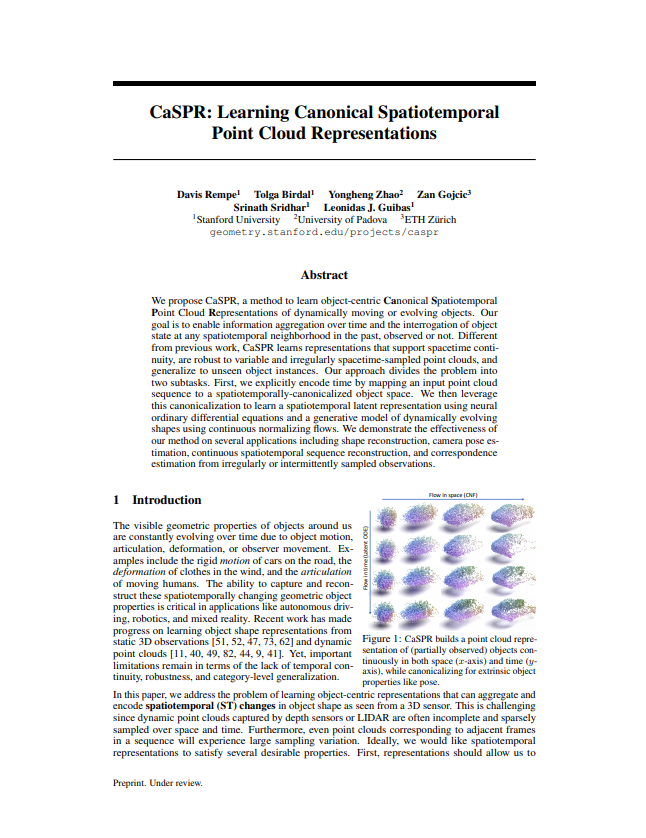

CaSPR: Learning Canonical SpatiotemporalPoint Cloud Representations

Abstract

We propose CaSPR, a method to learn object-centric Canonical Spatiotemporal Point cloud Representations of dynamically moving or evolving objects. Our goal is to enable information aggregation over time and the interrogation of object state at any spatiotemporal neighborhood in the past, observed or not. Different from previous work, CaSPR learns representations that support spacetime continuity, are robust to variable and irregularly spacetime-sampled point clouds, and generalize to unseen object instances. Our approach divides the problem into two subtasks. First, we explicitly encode time by mapping an input point cloud sequence to a spatiotemporally-canonicalized object space. We then leverage this canonicalization to learn a spatiotemporal latent representation using neural ordinary differential equations and a generative model of dynamically evolving shapes using continuous normalizing flows. We demonstrate the effectiveness of our method on several applications including shape reconstruction, camera pose estimation, continuous spatiotemporal sequence reconstruction, and correspondence estimation from irregularly or intermittently sampled observations.

Downloads

Links

Citation

@inproceedings{rempe2020caspr,

author={Rempe, Davis and Birdal, Tolga and Zhao, Yongheng and Gojcic, Zan and Sridhar, Srinath and Guibas, Leonidas J.},

title={CaSPR: Learning Canonical Spatiotemporal Point Cloud Representations},

booktitle={Advances in Neural Information Processing Systems (NeurIPS)},

year={2020}

}

Acknowledgments

This work was supported by grants from the Stanford-Ford Alliance, the SAIL-Toyota Center for AI Research, the Samsung GRO program, the AWS Machine Learning Awards Program, NSF grant IIS-1763268, and a Vannevar Bush Faculty Fellowship. The authors thank Michael Niemeyer for providing the code and shape models used to generate the warping cars dataset. Toyota Research Institute ("TRI") provided funds to assist the authors with their research but this article solely reflects the opinions and conclusions of its authors and not TRI or any other Toyota entity.